Now more than ever, the best graphics cards aren’t defined by their raw performance alone — they’re defined by their features. Nvidia has set the stage with DLSS, which now encompasses upscaling, frame generation, and a ray tracing denoiser, and AMD is hot on Nvidia’s heels with FSR 3. But what will define the next generation of graphics cards?

It’s no secret that features like DLSS 3 and FSR 3 are a key factor when buying a graphics card in 2024, and I suspect AMD and Nvidia are well aware of that trend. We already have a taste of what could come in the next generation of GPUs from Nvidia, AMD, and even Intel, and it could make a big difference in PC gaming. It’s called neural texture compression.

Let’s start with texture compression

Before we can get to neural texture compression, we have to talk about what texture compression is in the first place. Like any data compression, texture compression shrinks the data that makes up a texture, but it has a few unique elements compared to, for example, an image compression technique like JPEG. Texture compression gives up some visual quality in exchange for fast decoding, while static compression techniques often optimize for quality over speed.

This is important because game textures stay compressed until they’re rendered. They’re compressed in storage, compressed in memory and VRAM, and only decompressed when they’re actually rendered. Texture compression also needs to be optimized for random access, with rendering tapping different parts of the memory depending on the textures it needs at the time.

That’s done with block compression today, which takes a 4×4 block of pixels and encodes it into a fixed, smaller number of bits, hence the “block” name. Block compression has been around for decades. There are different formats, as well as techniques like Adaptive Scalable Texture Compression (ASTC) for mobile devices, but the core concept has stayed the same.
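To make the idea concrete, here’s a minimal sketch of a block codec in Python. It is a toy I made up for illustration, not any real GPU format: it handles grayscale only, stores two 8-bit endpoints plus a 2-bit index per texel (48 bits for a block that would otherwise take 128), and assumes blocks are laid out row by row. Real formats such as BC1 work on color and pack each block into 64 bits, and all of the function names here are hypothetical.

```python
BLOCK = 4  # texels per block edge, matching the 4x4 blocks described above


def make_palette(lo, hi):
    """Four evenly spaced values between the two stored endpoints."""
    return [lo + (hi - lo) * i // 3 for i in range(4)]


def encode_block(texels):
    """Encode 16 grayscale texels (0-255) as two endpoints plus 2-bit indices."""
    assert len(texels) == BLOCK * BLOCK
    lo, hi = min(texels), max(texels)
    palette = make_palette(lo, hi)
    # Each texel is replaced by the index of its nearest palette entry (lossy).
    indices = [min(range(4), key=lambda i: abs(palette[i] - t)) for t in texels]
    return lo, hi, indices


def decode_texel(encoded, x, y):
    """Decode one texel from a block without decoding its neighbors."""
    lo, hi, indices = encoded
    return make_palette(lo, hi)[indices[y * BLOCK + x]]


def block_index(x, y, width):
    """Which block holds texel (x, y), assuming row-by-row block storage."""
    blocks_per_row = width // BLOCK
    return (y // BLOCK) * blocks_per_row + (x // BLOCK)


block = [0, 85, 170, 255] * 4
encoded = encode_block(block)
print(encoded[:2], decode_texel(encoded, 2, 1))
print(block_index(9, 6, width=16))
```

Because every block compresses to the same size, the renderer can work out where any texel lives with simple arithmetic and decode just that block, which is what makes random access cheap.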

Here’s the issue — textures aren’t getting any smaller. Highly detailed game worlds call for highly detailed textures, putting more strain on your hardware to decode those textures, as well as on your memory and VRAM. We’ve seen higher memory requirements for games like Returnal and Hogwarts Legacy, and we’ve seen 8GB graphics cards struggle to keep up in games like Halo Infinite and Redfall. There’s also supercompression with tools like Oodle Texture — don’t confuse that with data compression via tools like Oodle Kraken — which compresses the already compressed textures for smaller download sizes. That needs to be decompressed by the CPU, putting more strain on your hardware.
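For a rough sense of scale, here’s a back-of-the-envelope calculation for a single 4096×4096 texture. The bit rates are the standard ones for these formats (32 bits per texel for uncompressed RGBA8, 8 for BC7, 4 for BC1); the helper name is my own, and the numbers ignore mipmaps and any padding a real engine would add.

```python
def texture_mib(width, height, bits_per_texel):
    """Size of one texture level in MiB, ignoring mipmaps and padding."""
    return width * height * bits_per_texel / 8 / (1024 * 1024)


FORMATS = {
    "RGBA8 (uncompressed)": 32,
    "BC7": 8,
    "BC1": 4,
}

for name, bits in FORMATS.items():
    print(f"{name}: {texture_mib(4096, 4096, bits):.0f} MiB")
```

Multiply that by the several texture maps a typical material uses, and by the number of materials in a scene, and it’s easy to see how an 8GB card runs out of room.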

The solution seems to be to throw AI at the problem, which is something Nvidia and AMD are both exploring right now, and it just might be the reason you buy a new graphics card.

The neural difference

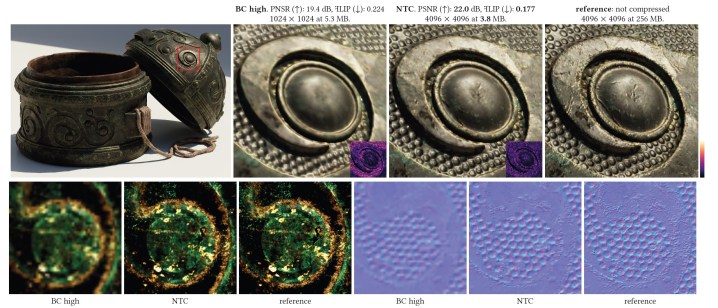

In August last year, Nvidia introduced Neural Texture Compression (NTC) at Siggraph. The technique is able to store 16 times as many texels as typical block compression, resulting in a texture with four times the resolution along each axis. That’s not impressive on its own, but this part is: “Our method allows for on-demand, real-time decompression with random access similar to block texture compression on GPUs.”
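The jump from 16 times the texels to four times the resolution is just a square root, since texel count grows with width times height. Here’s a quick sketch that takes Nvidia’s 16x figure at face value; the function names and the 1024×1024 starting size are my own assumptions for illustration.

```python
import math


def per_axis_scale(texel_ratio):
    """Per-axis resolution gain when the total texel count grows by texel_ratio."""
    return math.sqrt(texel_ratio)


def scaled_size(width, height, texel_ratio):
    """New dimensions after scaling both axes by the same factor."""
    scale = per_axis_scale(texel_ratio)
    return round(width * scale), round(height * scale)


print(per_axis_scale(16))
print(scaled_size(1024, 1024, 16))
```

In other words, a budget that holds a 1024×1024 block-compressed texture would hold roughly a 4096×4096 one under that claim.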

NTC uses a small neural network to decompress these textures directly on the GPU, and in a time window that’s competitive with block compression. As the abstract says, “this extends our compression benefits all the way from disk storage to memory.”

Nvidia isn’t the only one. AMD just revealed that it will discuss neural block texture compression at this year’s Siggraph with a research paper of its own. Intel has addressed the problem, too, specifically calling out VRAM limitations when it introduced an AI-driven level of detail (LoD) technique for 3D objects.

Although these are just research papers, they’re all getting at neural rendering. Given how AI is sweeping the world of computing, it’s hardly surprising that AMD, Nvidia, and Intel are all looking for the next frontier in neural rendering. If you need more convincing, here’s what Nvidia CEO Jensen Huang had to say on the matter in a recent Q&A: “AI for gaming — we already use it for neural graphics, and we can generate pixels based off of few input pixels. We also generate frames between frames — not interpolation, but generation. In the future we’ll even generate textures and objects, and the objects can be of lower quality and we can make them look better.”

A rising tide

At the moment, it’s impossible to say how neural texture compression will show up. It could be relegated to middleware, stuffed into a logo as you start up your game, and never given a second thought. It might never manifest as a feature that shows up in games, especially if there’s a better use for it elsewhere. Or it could be one of the key features that stands out in the next generation of graphics cards.

I’m not saying it will be, but clearly AMD, Nvidia, and Intel all recognize something here. There’s a balance to strike between install size, memory demands, and the final quality of textures in a game, and neural texture compression seems like the key to giving developers more room to play with. Maybe that leads to more-detailed worlds, or maybe there’s a slight bump in detail with much less demand on memory. That’s up to developers to balance.

There’s a clear benefit, but the requirements remain a mystery. So far, AMD hasn’t presented its research, and Nvidia’s research is based on the performance of an RTX 4090. In an ideal world, neural texture compression — or more accurately, neural decompression — would be a developer-facing feature that works on a wide range of hardware. If it’s as significant as some of these research papers suggest, though, it might be the next frontier for PC gaming.

I suspect this isn’t the last we’ve heard of it, at least. We’re standing on the edge of a new generation of graphics cards, from Nvidia’s RTX 50-series to AMD’s RX 8000 GPUs to Intel Battlemage. As we start to learn about these GPUs, I have a hard time imagining neural texture compression won’t be part of the conversation.